IBM is a very interesting player in the open source ecosystem and, in my opinion, the best. They understand how it works and how to leverage it toward their business goals. To their customers they are the trusted business partner, and they certainly portray themselves as open and flexible. They are very smart about where to contribute to gain influence in open source, and about what and how to consume so that it meets their business objectives. And the wonderful thing is that they have been able to pull this off without ruffling many feathers in the community.

In the changed software landscape of open source, the core competency is not “software features” but “speed”: the speed with which a firm can leverage external innovations, not by copying everything but by quickly assembling products from proprietary and open components.

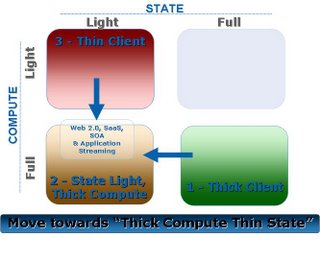

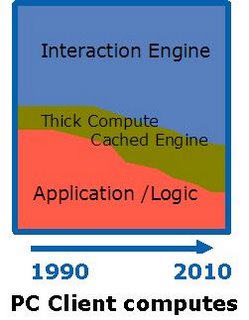

In my opinion, IBM believes that in the long run all software is going to be free and open, and hence will not have much value in itself. The trick is to extract as much value as possible during the journey to that end state. To do that they leverage a "Pluggable Integration Architecture", a “Lego blocks” type of approach that can accommodate both proprietary and open source components. Pluggable integration architectures are the new influence points, and they allow IBM to “open” its existing software product portfolio in increments and on its own terms.

This totally changes the competitive landscape. If the market environment changes, whether from competitive pressure or from the availability of better open source components, IBM now has a mechanism to respond fast. IBM can very easily slot in components from open source (like Apache httpd) and also commoditize components when it sees competitive threats (like modeling tools). By getting the industry to adopt an open integration framework, they have a ready channel to slot in proprietary pieces on top of open pieces, and IBM is in a position to extract value on the road towards "Total Commoditization".
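
To make the Lego-block idea a bit more concrete, here is a minimal sketch in Python of what a pluggable integration point might look like. It is purely illustrative: the `Platform` class, the slot names, and the component functions are all hypothetical names invented for this example and do not describe any actual IBM product or API.

```python
from typing import Callable, Dict

# Hypothetical names throughout: Platform, the slot names, and the component
# functions are illustrative only and do not describe any real product.
Component = Callable[[str], str]


class Platform:
    """A tiny integration framework with named slots for components."""

    def __init__(self) -> None:
        self._slots: Dict[str, Component] = {}

    def register(self, slot: str, component: Component) -> None:
        # Registering into an occupied slot replaces the previous component.
        self._slots[slot] = component

    def run(self, slot: str, payload: str) -> str:
        # Callers only know the slot name, not which component fills it.
        return self._slots[slot](payload)


def proprietary_web_server(request: str) -> str:
    return f"proprietary server handled {request}"


def open_source_web_server(request: str) -> str:
    return f"open source server handled {request}"


def proprietary_analytics(data: str) -> str:
    return f"premium report on {data}"


platform = Platform()
platform.register("web", proprietary_web_server)
platform.register("analytics", proprietary_analytics)

# A better open component appears: swap it in without touching the rest.
platform.register("web", open_source_web_server)

print(platform.run("web", "GET /"))
print(platform.run("analytics", "sales"))
```

The point of the sketch is that whoever defines the slots controls what can be plugged in: the commoditized "web" slot is filled by an open component, while the proprietary piece keeps sitting on top in the "analytics" slot.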

And regarding Java, I believe IBM will get over the gloom very soon and then embrace it, making it yet another Lego block in the puzzle.